Deploying a Cloudify Manager Worker to Kubernetes

Cloudify Manager Worker Helm chart (Premium version)

Description

This Helm chart deploys a Cloudify Manager that:

- Is highly available and can be deployed with multiple replicas (available only with an NFS-like storage file system).

- Uses a persistent volume to survive restarts and failures.

- Uses an external database (PostgreSQL), which can be deployed with the public Bitnami Helm chart: https://github.com/bitnami/charts/tree/master/bitnami/postgresql

- Uses an external message broker (RabbitMQ), which can be deployed with the public Bitnami Helm chart: https://github.com/bitnami/charts/tree/master/bitnami

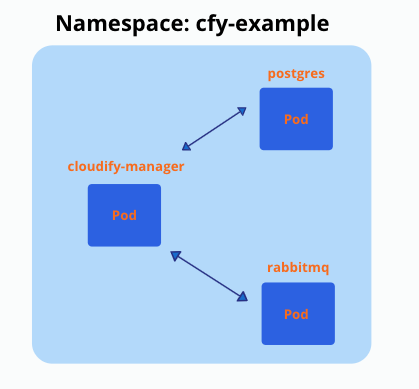

This is how the setup looks after it’s deployed to ‘cfy-example’ namespace (it’s possible to have multiple replicas (pods) of cloudify manager):

Cloudify-Helm GitHub repo

Prerequisites

- Docker installed

- Kubectl installed

- Helm installed

- A running Kubernetes cluster (see the differences between cloud providers)

- Kubernetes nodes that meet the minimum requirements

- A valid Cloudify Premium license (for the Premium version)
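Before starting, it can help to confirm that the command-line tools from the list above are on your PATH. The following is a minimal sketch and not part of the chart; the function name `missing_tools` is made up for illustration.

```shell
# Print the name of every tool from the argument list that is not on PATH.
# missing_tools is a hypothetical helper name, not part of Cloudify or Helm.
missing_tools() {
    for tool in "$@"; do
        command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
    done
}

MISSING=$(missing_tools docker kubectl helm)
if [ -n "$MISSING" ]; then
    # $MISSING is left unquoted on purpose so the names print on one line.
    echo "Missing prerequisites:" $MISSING
else
    echo "docker, kubectl and helm are installed."
fi
```

This only checks that the binaries exist. It does not verify versions or that kubectl can reach a cluster.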

How to create and deploy such a setup?

You need to deploy the database and the message broker before deploying the Cloudify Manager worker.

Generate certificates and add as secret to k8s

An SSL certificate must be provided to secure communication between Cloudify Manager and PostgreSQL/RabbitMQ. The following files are needed:

ca.crt (to sign other certificates)

tls.key

tls.crt
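Whichever of the options below you use to obtain them, the secret created later needs all three files. A small sketch that reports which of them are missing from a directory; `missing_cert_files` is an illustrative name, and the directory argument is an assumption about where you keep the files.

```shell
# Print each expected certificate file that is absent from the given directory.
# missing_cert_files is a hypothetical helper; the default directory is ".".
missing_cert_files() {
    dir="${1:-.}"
    for f in ca.crt tls.crt tls.key; do
        [ -f "$dir/$f" ] || printf '%s\n' "$f"
    done
}

MISSING=$(missing_cert_files .)
if [ -n "$MISSING" ]; then
    # Unquoted on purpose so the file names print on one line.
    echo "Not found in the current directory:" $MISSING
fi
```

The check covers file presence only. It does not validate that the certificate matches the key or was signed by ca.crt.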

Option 1: Create certificates using the community cloudify manager docker container

$ docker pull cloudifyplatform/community-cloudify-manager-aio:latest

$ docker run --name cfy_manager_local -d --restart unless-stopped --tmpfs /run --tmpfs /run/lock cloudifyplatform/community-cloudify-manager-aio

Exec to the manager and generate certificates

$ docker exec -it cfy_manager_local bash

# NAMESPACE is the namespace to which cloudify-manager is deployed; change it accordingly

$ cfy_manager generate-test-cert -s 'cloudify-manager-worker.NAMESPACE.svc.cluster.local,rabbitmq.NAMESPACE.svc.cluster.local,postgres-postgresql.NAMESPACE.svc.cluster.local'

You can change the name of the created certificates (inside the container):

$ cd /root/.cloudify-test-ca

$ mv cloudify-manager-worker.NAMESPACE.svc.cluster.local.crt tls.crt

$ mv cloudify-manager-worker.NAMESPACE.svc.cluster.local.key ./tls.key

Exit the container and copy the certificates from the container to your working environment:

$ docker cp cfy_manager_local:/root/.cloudify-test-ca/. ./

Create secret in k8s from certificates:

$ kubectl create secret generic cfy-certs --from-file=./tls.crt --from-file=./tls.key --from-file=./ca.crt -n NAMESPACE

Option 2: Use cert-manager component installed to kubernetes cluster

Deploy the following manifests, which eventually generate the cfy-certs secret. Replace NAMESPACE with your namespace before applying them. The manifest is available in the external folder as cert-issuer.yaml.

apiVersion: cert-manager.io/v1alpha2
kind: Issuer
metadata:
  name: selfsigned-issuer
spec:
  selfSigned: {}
---
apiVersion: cert-manager.io/v1alpha2
kind: Certificate
metadata:
  name: cfy-ca
spec:
  secretName: cfy-ca-tls
  commonName: NAMESPACE.svc.cluster.local
  usages:
    - server auth
    - client auth
  isCA: true
  issuerRef:
    name: selfsigned-issuer
---
apiVersion: cert-manager.io/v1alpha2
kind: Issuer
metadata:
  name: cfy-ca-issuer
spec:
  ca:
    secretName: cfy-ca-tls
---
apiVersion: cert-manager.io/v1alpha2
kind: Certificate
metadata:
  name: cfy-cert
spec:
  secretName: cfy-certs
  isCA: false
  usages:
    - server auth
    - client auth
  dnsNames:
    - "postgres-postgresql.NAMESPACE.svc.cluster.local"
    - "rabbitmq.NAMESPACE.svc.cluster.local"
    - "cloudify-manager-worker.NAMESPACE.svc.cluster.local"
    - "postgres-postgresql"
    - "rabbitmq"
    - "cloudify-manager-worker"
  issuerRef:
    name: cfy-ca-issuer

Create a local copy of cert-issuer.yaml and apply it to the namespace:

$ kubectl apply -f ./cert-issuer.yaml -n NAMESPACE

Clone cloudify-helm repo

This step is necessary because the following steps require files from this directory.

- In case you don’t have Git installed - https://github.com/git-guides/install-git

$ git clone https://github.com/cloudify-cosmo/cloudify-helm.git && cd cloudify-helm

Install PostgreSQL (Bitnami) to Kubernetes cluster with Helm

First, add the Bitnami Helm repository, which hosts the PostgreSQL and RabbitMQ charts:

$ helm repo add bitnami https://charts.bitnami.com/bitnami

You can find an example PostgreSQL values.yaml in external/postgres-values.yaml

Use the certificate created earlier as the k8s secret ‘cfy-certs’:

volumePermissions:
  enabled: true
tls:
  enabled: true
  preferServerCiphers: true
  certificatesSecret: 'cfy-certs'
  certFilename: 'tls.crt'
  certKeyFilename: 'tls.key'

Install PostgreSQL with postgres-values.yaml, pinning the chart version:

$ helm install postgres bitnami/postgresql -f ./cloudify-manager-worker/external/postgres-values.yaml --version 10.15.0 -n NAMESPACE

Install RabbitMQ (Bitnami) to Kubernetes cluster with Helm

Use the certificate created earlier as the k8s secret ‘cfy-certs’:

tls:
  enabled: true
  existingSecret: cfy-certs
  failIfNoPeerCert: false
  sslOptionsVerify: verify_peer
  caCertificate: |-
  serverCertificate: |-
  serverKey: |-

Run the management console on port 15671 with SSL (Cloudify Manager talks to the management console via SSL) by adding the following to rabbitmq-values.yaml:

configuration: |-
  management.ssl.port = 15671
  management.ssl.cacertfile = /opt/bitnami/rabbitmq/certs/ca_certificate.pem
  management.ssl.certfile = /opt/bitnami/rabbitmq/certs/server_certificate.pem
  management.ssl.keyfile = /opt/bitnami/rabbitmq/certs/server_key.pem
extraPorts:
  - name: manager-ssl
    port: 15671
    targetPort: 15671

Install RabbitMQ with rabbitmq-values.yaml, pinning the chart version:

$ helm install rabbitmq bitnami/rabbitmq -f ./cloudify-manager-worker/external/rabbitmq-values.yaml --version 8.29.0 -n NAMESPACE

Install cloudify manager worker

Create a ConfigMap with the premium license (required if using the Cloudify Premium version)

Create a license.yaml file and populate it with the license data.

- Both American and British spelling are accepted, but the spelling must be aligned across all ‘license/licence’ strings (values/ConfigMaps)

apiVersion: v1
kind: ConfigMap
metadata:
  name: cfy-license
  namespace: <NAMESPACE>
data:
  cfy_license.yaml: |
    license:
      capabilities: null
      cloudify_version: null
      customer_id: <CUSTOMER_ID>
      expiration_date: 12/31/2021
      license_edition: Premium
      trial: false
    signature: !!binary |
      <LICENSE_KEY>

Enable the license in the values file.

- The license name (metadata.name) must match the secretName in the values file

license:
  secretName: cfy-license

Apply the created ConfigMap:

$ kubectl apply -f license.yaml

Add the cloudify-helm repo

Add the cloudify-helm repo or update it:

$ helm repo add cloudify-helm https://cloudify-cosmo.github.io/cloudify-helm

or

$ helm repo update cloudify-helm

If you want to customize the values, it is recommended to do so before installing the chart (see the configuration options below). Either way, make sure to review the values file.
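One way to keep customizations easy to review is to leave the repository's values.yaml untouched and put only your changes in a second file, since Helm gives priority to the last `-f` file when several are passed. A sketch, assuming an override file named `my-values.yaml` (a hypothetical name) and example settings taken from the configuration options in this guide:

```shell
# Write only the settings you want to change; keys mirror the chart's values.yaml.
# The file name my-values.yaml is illustrative, not something the chart expects.
cat > my-values.yaml <<'EOF'
image:
  tag: "6.3.0"
ingress:
  enabled: false
EOF

# The right-most -f file takes precedence, so the overrides win.
# The command is printed, not executed, so it can be reviewed first.
INSTALL_CMD="helm install cloudify-manager-worker cloudify-helm/cloudify-manager-worker -f ./cloudify-manager-worker/values.yaml -f ./my-values.yaml -n NAMESPACE"
printf '%s\n' "$INSTALL_CMD"
```

Keeping overrides in their own file also makes it easier to see what differs from the defaults when you later upgrade.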

(optional) Ensure UI access to the manager upon installation

[OPTION 1]

Use an ingress controller (e.g. NGINX Ingress Controller - https://kubernetes.github.io/ingress-nginx/deploy/)

HTTP

- Modify the Ingress section accordingly (see example):

ingress:
  enabled: true
  host: cloudify-manager.DOMAIN
  annotations:
    kubernetes.io/ingress.class: nginx
    nginx.ingress.kubernetes.io/proxy-body-size: 50m # use this annotation to allow upload of resources up to 50mb (e.g. plugins)
    # cert-manager.io/cluster-issuer: "letsencrypt-prod" # use this annotation to utilize an installed cert-manager
  tls:
    enabled: false
    secretName: cfy-secret-name

HTTPS - Pre-applied SSL Cert

- Create an SSL secret with the TLS certificate (values under data must be base64-encoded):

apiVersion: v1
kind: Secret
metadata:
  name: cfy-secret-name
  namespace: NAMESPACE
data:
  tls.crt: SSL_TLS_CRT
  tls.key: SSL_TLS_KEY
type: kubernetes.io/tls

- Modify the Ingress section accordingly (see example):

ingress:
  enabled: true
  host: cloudify-manager.DOMAIN
  annotations:
    kubernetes.io/ingress.class: nginx
    nginx.ingress.kubernetes.io/proxy-body-size: 50m # use this annotation to allow upload of resources up to 50mb (e.g. plugins)
    # cert-manager.io/cluster-issuer: "letsencrypt-prod" # use this annotation to utilize an installed cert-manager
  tls:
    enabled: true
    secretName: cfy-secret-name

HTTPS - Certificate Manager

- Use a certificate manager (e.g. Let’s Encrypt via cert-manager - https://cert-manager.io/docs/)

- Modify the Ingress section accordingly (see example):

ingress:
  enabled: true
  host: cloudify-manager.DOMAIN
  annotations:
    kubernetes.io/ingress.class: nginx
    nginx.ingress.kubernetes.io/proxy-body-size: 50m # use this annotation to allow upload of resources up to 50mb (e.g. plugins)
    cert-manager.io/cluster-issuer: "<cluster-issuer-name>" # use this annotation to utilize an installed cert-manager
  tls:
    enabled: true
    secretName: cfy-secret-name

The HTTP/HTTPS options expose the Cloudify Manager UI on a URL matching the host value.

[OPTION 2]

Skip Ingress and expose the Cloudify Manager service using a LoadBalancer

HTTP

For this method, edit the Service section to use the right type:

service:
  host: cloudify-manager-worker
  type: LoadBalancer
  name: cloudify-manager-worker
  http:
    port: 80
  https:
    port: 443
  internal_rest:
    port: 53333

This creates a load balancer that depends on your Kubernetes infrastructure (e.g. EKS creates a Classic Load Balancer). To get the hostname of the load balancer, run:

$ kubectl describe svc/cloudify-manager-worker -n NAMESPACE | grep Ingress

The value of the ingress is the UI URL of the Cloudify Manager.
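If you want the address on its own, for example to feed it into a DNS record, the matching line of the `kubectl describe` output can be filtered further. A sketch; `lb_address` is an illustrative helper name and the sample hostname is made up.

```shell
# Read `kubectl describe svc` output on stdin and print the load balancer address.
# lb_address is a hypothetical helper; it splits the line on the first ": ".
lb_address() {
    awk -F': *' '/LoadBalancer Ingress:/ { print $2 }'
}

# Demonstration on a fabricated sample of describe output.
printf 'Type:                     LoadBalancer\nLoadBalancer Ingress:     example-1234.elb.amazonaws.com\n' | lb_address

# Against a real cluster (replace NAMESPACE first):
#   kubectl describe svc/cloudify-manager-worker -n NAMESPACE | lb_address
```

The filter assumes a hostname or IPv4 address. An address that itself contains colons would need a different split.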

HTTPS

- To secure the site with SSL, you can update the load balancer configuration to use an SSL certificate

- To have a fixed URL, you can use a DNS service to route the LB URL (hostname) to the URL you want

After the values are verified, install the manager worker chart:

$ helm install cloudify-manager-worker cloudify-helm/cloudify-manager-worker -f ./cloudify-manager-worker/values.yaml -n NAMESPACE

Configuration options of cloudify-manager-worker values.yaml

Edit the values file in ./cloudify-manager-worker/values.yaml according to your preferences:

Upgrade cloudify manager worker

To upgrade Cloudify Manager, use ‘helm upgrade’.

For example, to move to a newer version (from 6.2.0 to 6.3.0 in this example), change the image version in values.yaml:

Before:

image:
  repository: cloudifyplatform/premium-cloudify-manager-worker
  tag: 6.2.0

After:

image:
  repository: cloudifyplatform/premium-cloudify-manager-worker
  tag: 6.3.0

Run ‘helm upgrade’:

$ helm upgrade cloudify-manager-worker cloudify-helm/cloudify-manager-worker -f ./cloudify-manager-worker/values.yaml -n NAMESPACE

If the DB schema changed in the newer version, the required migration runs on the DB first, and then the application is restarted during the upgrade. Be patient, because it may take a couple of minutes.
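While the migration and restart run, you can follow progress with `kubectl rollout status` instead of checking by hand. A sketch, assuming the chart's StatefulSet is named `cloudify-manager-worker` (an assumption based on the release name; confirm it with `kubectl get statefulset -n NAMESPACE`) and an arbitrary 10-minute timeout:

```shell
NAMESPACE="cfy-example"                          # example namespace from this guide; change as needed
WORKLOAD="statefulset/cloudify-manager-worker"   # assumed resource name, verify in your cluster

# Build the command once so it can be logged before it is run.
ROLLOUT_CMD="kubectl rollout status $WORKLOAD -n $NAMESPACE --timeout=10m"
echo "Running: $ROLLOUT_CMD"

# Only attempt the call where kubectl is available.
if command -v kubectl >/dev/null 2>&1; then
    $ROLLOUT_CMD || echo "Rollout not confirmed within the timeout; inspect the pod logs."
fi
```

If the command times out, the upgrade may still be in progress. The timeout only bounds how long the script waits.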

Image:

image:
  repository: "cloudifyplatform/premium-cloudify-manager-worker"
  tag: "6.3.0"
  pullPolicy: IfNotPresent

DB - PostgreSQL:

db:
  host: postgres-postgresql
  cloudify_db_name: 'cloudify_db'
  cloudify_username: 'cloudify'
  cloudify_password: 'cloudify'
  server_db_name: 'postgres'
  server_username: 'postgres'
  server_password: 'cfy_test_pass'

Message Broker - RabbitMQ:

queue:
  host: rabbitmq
  username: 'cfy_user'
  password: 'cfy_test_pass'

Service:

See the customization example above.

service:
  host: cloudify-manager-worker
  type: ClusterIP
  name: cloudify-manager-worker
  http:
    port: 80
  https:
    port: 443
  internal_rest:
    port: 53333

Node selector - select on which nodes Cloudify Manager may run:

nodeSelector: {}
# nodeSelector:
#   nodeType: onDemand

Secret name of certificate:

secret:
  name: cfy-certs

Resources requests and limits:

resources:
  requests:
    memory: 0.5Gi
    cpu: 0.5

Persistent volume size for EBS/EFS:

If using multiple replicas (high availability), NFS-like storage such as EFS must be used. For more details, see the links to the different cloud providers.

volume:
  storage_class: 'efs'
  access_mode: 'ReadWriteMany'
  size: "3Gi"

If using a single replica, you can use EBS (gp2), for example; gp2 is the default:

volume:
  storage_class: 'gp2'
  access_mode: 'ReadWriteOnce'
  size: "3Gi"

The readiness probe may be enabled or disabled:

readinessProbe:
  enabled: true
  port: 80
  path: /console
  initialDelaySeconds: 10

Config

You can delay the start of the Cloudify Manager, install all plugins, or disable security (not recommended):

config:
  start_delay: 0
  # Multiple replicas works only with EFS(NFS) volume
  replicas: 1
  install_plugins: false
  cli_local_profile_host_name: localhost
  security:
    ssl_enabled: false
    admin_password: admin
  tls_cert_path: /mnt/cloudify-data/ssl/tls.crt
  tls_key_path: /mnt/cloudify-data/ssl/tls.key
  ca_cert_path: /mnt/cloudify-data/ssl/ca.crt

Ingress

You may enable ingress-nginx and have the certificate generated automatically if ingress-nginx and cert-manager are installed (or use nginx with an existing SSL secret). See above for more details.

ingress:
  enabled: false
  host: cloudify-manager.app.cloudify.co
  annotations:
    kubernetes.io/ingress.class: nginx
    nginx.ingress.kubernetes.io/proxy-body-size: 50m # use this annotation to allow upload of resources up to 50mb (e.g. plugins)
    # cert-manager.io/cluster-issuer: "letsencrypt-prod" # use this annotation to utilize an installed cert-manager
  tls:
    enabled: false
    secretName: cfy-secret-name

Troubleshoot

Some common use cases:

License is not uploaded correctly upon installation

This might happen if the spelling convention (licence/license) is not aligned across the values file (both the key name and its value) or across the license/licence ConfigMap.

Also, the StatefulSet expects the license/licence ConfigMap to hold its data under the key cfy_license.yaml (spelled according to the chosen convention).

After ensuring the above, try to reinstall the worker chart.

- A workaround for this issue is to upload the license manually after the manager installation, either through the UI after logging in or via the CLI.

Cloudify Manager installation succeeded but I can’t reach the UI

See "(optional) Ensure UI access to the manager upon installation" above.

If you already installed the chart, update the values accordingly and run:

$ helm upgrade cloudify-manager-worker cloudify-helm/cloudify-manager-worker -f <path-to-values.yaml-file> -n NAMESPACE

I had to reinstall the worker chart and now it fails on installation

This might happen due to inter-communication between the components in the different pods. A workaround is to delete the postgresql pod (which has a PersistentVolume) and the rabbitmq pod, which triggers a restart for them.

$ kubectl delete pod postgres-postgresql-0 -n NAMESPACE

$ kubectl delete pod rabbitmq-0 -n NAMESPACE

Then try reinstalling the worker chart.

Can’t find the help you need here?

Feel free to open an issue in the helm chart GitHub page, or contact us through our website.

Uninstallation

As the whole setup is built mainly from 3 Helm charts, you simply need to uninstall them.

$ helm uninstall cloudify-manager-worker postgres rabbitmq -n NAMESPACE

To clean the supporting files:

$ kubectl delete secret cfy-certs -n NAMESPACE

$ kubectl delete configmap cfy-license -n NAMESPACE
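After the cleanup, it may be worth listing what is still present in the namespace. PersistentVolumeClaims created for StatefulSets are commonly left behind by `helm uninstall`, although whether that applies to each of these charts is an assumption you should verify. A sketch that prints, and where kubectl is available runs, one listing per resource kind:

```shell
NAMESPACE="cfy-example"   # example namespace from this guide; change as needed

# List leftovers per resource kind using kubectl's standard `get`.
for kind in pvc secret configmap; do
    LIST_CMD="kubectl get $kind -n $NAMESPACE"
    echo "+ $LIST_CMD"
    if command -v kubectl >/dev/null 2>&1; then
        $LIST_CMD || echo "could not list $kind"
    fi
done
```

Delete any remaining claims only after confirming that the data on them is no longer needed.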